AWS EKS Capabilities: Fully Managed Argo CD — Setup with Terraform

A Software Product Engineer, cloud enthusiast | Blogger | DevOps | SRE | Python Developer. I usually automate my day-to-day work and blog about the challenging problems I run into.

Hello All,

If you've been running Argo CD on EKS, you know the drill: install the Helm chart, manage the controllers, handle upgrades, configure SSO, worry about HA, and repeat for every cluster. That is a lot of operational overhead for a tool that's supposed to simplify your life.

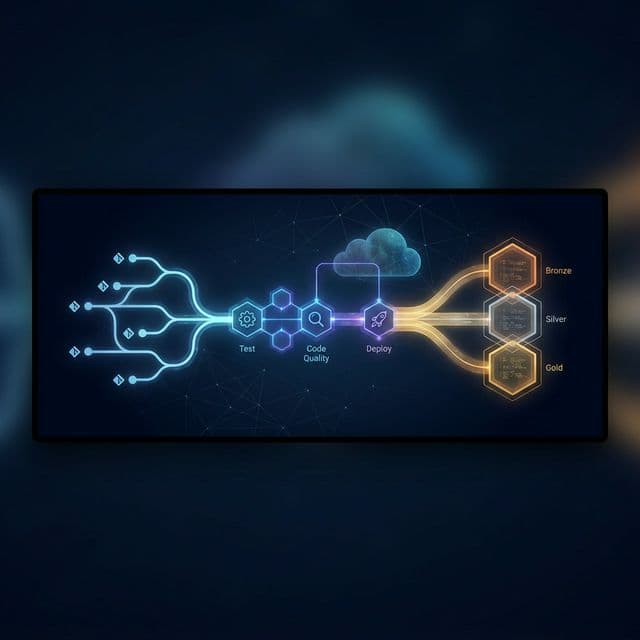

AWS recently introduced EKS Capabilities, and one of the first capabilities available is a fully managed Argo CD. AWS runs, scales, and upgrades the Argo CD controllers in AWS-owned accounts, outside of your cluster, so you don't install anything on your worker nodes.

The Argo CD software runs in the AWS control plane, not on your worker nodes.

Sounds interesting? Let's walk through the setup using Terraform.

What Changes with EKS Capability for Argo CD?

Before this, self-managing Argo CD meant:

- Installing and maintaining Argo CD controllers on your cluster
- Configuring SSO separately (Dex, OIDC, etc.)
- Managing HA, scaling, and upgrades yourself
- Setting up IRSA or cross-account IAM for multi-cluster deployments

With EKS Capabilities, all of that is handled by AWS. The key highlights:

- Argo CD runs in AWS-owned accounts, not on your worker nodes
- Native integration with AWS IAM Identity Center (successor to AWS SSO) for user management, so no more Dex configs
- Simplified multi-cluster access using EKS Access Entries
- Native integrations with ECR, Secrets Manager, and CodeConnections

User management moves out of Argo CD entirely and into AWS IAM Identity Center. If you've been struggling with Argo CD RBAC + SSO, this is a relief.

The Catch with Terraform

Although the terraform-aws-modules/eks module supports this capability, it does not work fully out of the box on a fresh EKS setup. There are a few manual steps and dependencies you need to wire together.

In this blog, I'll walk through the setup and how to get it running quickly.

Step 1: Create the Argo CD Capability

Using the EKS capability sub-module from terraform-aws-modules/eks:

```hcl
module "argocd" {
  source  = "terraform-aws-modules/eks/aws//modules/capability"
  version = "~> 21.16"

  name         = "${local.cluster_name}-argocd"
  cluster_name = module.eks.cluster_name
  type         = "ARGOCD"

  configuration = {
    argo_cd = {
      aws_idc = {
        idc_instance_arn = "arn:aws:sso:::instance/<YOUR_IDC_INSTANCE_ID>"
      }
      namespace = "argocd"
      rbac_role_mapping = [{
        role = "ADMIN"
        identity = [{
          id   = data.aws_identitystore_group.argocd_admin.group_id
          type = "SSO_GROUP"
        }]
      }]
    }
  }

  iam_policy_statements = {
    ECRRead = {
      actions = [
        "ecr:GetAuthorizationToken",
        "ecr:BatchCheckLayerAvailability",
        "ecr:GetDownloadUrlForLayer",
        "ecr:BatchGetImage",
      ]
      resources = ["*"]
    }
  }

  tags = local.additional_tags
}
```

A few things to note here:

- `idc_instance_arn`: your AWS IAM Identity Center instance ARN. Make sure Identity Center is configured in your account before proceeding.
- `rbac_role_mapping`: maps your Identity Center groups to Argo CD RBAC roles such as ADMIN and VIEWER. This replaces the traditional Argo CD RBAC ConfigMap (`argocd-rbac-cm`) approach.
- The `iam_policy_statements` block grants ECR read access so Argo CD can pull Helm charts and other OCI artifacts from your private ECR registries. (Container images themselves are pulled by the kubelet on your nodes, not by Argo CD.)
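The module block above reads `data.aws_identitystore_group.argocd_admin` but never defines it. Below is a minimal sketch of the missing lookups, assuming Identity Center is enabled in the same Region as your Terraform AWS provider. The group display name `argocd-admins` and the local `idc_instance_arn` are hypothetical; substitute your own values.

```hcl
# Look up the IAM Identity Center instance visible to this provider/Region.
data "aws_ssoadmin_instances" "this" {}

# Resolve the Identity Center group used in rbac_role_mapping.
# "argocd-admins" is a hypothetical display name; use your own group.
data "aws_identitystore_group" "argocd_admin" {
  identity_store_id = tolist(data.aws_ssoadmin_instances.this.identity_store_ids)[0]

  alternate_identifier {
    unique_attribute {
      attribute_path  = "DisplayName"
      attribute_value = "argocd-admins"
    }
  }
}

locals {
  # Hypothetical local that could replace the hard-coded idc_instance_arn.
  idc_instance_arn = tolist(data.aws_ssoadmin_instances.this.arns)[0]
}
```

With this in place, `idc_instance_arn = local.idc_instance_arn` in the module avoids copying the ARN by hand. If your Identity Center home Region differs from the cluster Region, point these data sources at an aliased provider for that Region.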

Step 2: Configure EKS Access Entry for Argo CD

This is a critical step. The capability needs cluster-level access to manage deployments. We wire this up using EKS Access Entries:

```hcl
resource "aws_eks_access_entry" "argocd" {
  cluster_name  = module.eks.cluster_name
  principal_arn = module.argocd.iam_role_arn
  type          = "STANDARD"
}

resource "aws_eks_access_policy_association" "argocd" {
  cluster_name  = module.eks.cluster_name
  principal_arn = module.argocd.iam_role_arn
  policy_arn    = "arn:aws:eks::aws:cluster-access-policy/AmazonEKSClusterAdminPolicy"

  access_scope {
    type = "cluster"
  }

  depends_on = [aws_eks_access_entry.argocd]
}
```

The `AmazonEKSClusterAdminPolicy` provides full cluster-admin access. This is fine for getting started, but for production consider scoping it down with custom Kubernetes RBAC bindings.

If you've read my earlier blog on EKS Access Entries, this pattern should feel familiar.
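One way to scope down without hand-writing RBAC is to swap the cluster-admin association for a namespace-scoped EKS access policy. This is a sketch, not a verified configuration for the managed capability. It assumes your workloads live in a single namespace (the hypothetical `my-app`) and reuses the resource name `aws_eks_access_policy_association.argocd`, so it replaces that resource rather than sitting alongside it.

```hcl
# Replaces the cluster-admin association: edit rights limited to listed namespaces.
resource "aws_eks_access_policy_association" "argocd" {
  cluster_name  = module.eks.cluster_name
  principal_arn = module.argocd.iam_role_arn
  policy_arn    = "arn:aws:eks::aws:cluster-access-policy/AmazonEKSEditPolicy"

  access_scope {
    type       = "namespace"
    namespaces = ["my-app"] # hypothetical application namespace(s)
  }

  depends_on = [aws_eks_access_entry.argocd]
}
```

Argo CD's controller normally watches resources cluster-wide. With a namespace-scoped policy, you will likely also need to restrict the registered cluster to the same namespaces, because Argo CD cluster secrets accept a `namespaces` field. Test this against your applications before relying on it.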

Step 3: Register Your Cluster (Mandatory!)

Here's where most people get stuck. The Argo CD capability does not automatically register the local cluster. You must explicitly register it as a deployment target.

This is done by creating a Kubernetes Secret in the argocd namespace:

```yaml
apiVersion: v1
kind: Secret
metadata:
  name: local-cluster
  namespace: argocd
  labels:
    argocd.argoproj.io/secret-type: cluster
stringData:
  name: in-cluster
  server: arn:aws:eks:<REGION>:<ACCOUNT_ID>:cluster/<CLUSTER_NAME>
  project: default
```

Points to note:

- Use the EKS cluster ARN in the `server` field, not the Kubernetes API server URL. The managed capability requires ARNs to identify clusters.
- `kubernetes.default.svc` is not supported here.
- This step depends on the access policy association from Step 2 being completed first.

Apply it:

```shell
kubectl apply -f local-cluster.yaml
```

Once registered, the cluster will show an Unknown connection state until you create your first application. That is expected behavior.
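Because the rest of the setup lives in Terraform, you can manage the cluster secret there too and let `depends_on` enforce the ordering from Step 2. Below is a minimal sketch, assuming the `kubernetes` provider authenticates with `aws eks get-token`, the identity running Terraform already has cluster access (for example, as the cluster creator), and the capability creates the `argocd` namespace. It also assumes `module.eks` exposes the `cluster_endpoint`, `cluster_certificate_authority_data`, and `cluster_arn` outputs.

```hcl
# Assumption: Terraform reaches the cluster with a short-lived EKS token.
provider "kubernetes" {
  host                   = module.eks.cluster_endpoint
  cluster_ca_certificate = base64decode(module.eks.cluster_certificate_authority_data)

  exec {
    api_version = "client.authentication.k8s.io/v1beta1"
    command     = "aws"
    args        = ["eks", "get-token", "--cluster-name", module.eks.cluster_name]
  }
}

# Same Secret as local-cluster.yaml, managed by Terraform instead of kubectl.
resource "kubernetes_secret_v1" "local_cluster" {
  metadata {
    name      = "local-cluster"
    namespace = "argocd"
    labels = {
      "argocd.argoproj.io/secret-type" = "cluster"
    }
  }

  # Plain-text values; the provider base64-encodes them into the Secret.
  data = {
    name    = "in-cluster"
    server  = module.eks.cluster_arn # EKS cluster ARN, not the API server URL
    project = "default"
  }

  # Wait for the capability (which owns the argocd namespace) and the
  # Argo CD access policy association from Step 2.
  depends_on = [
    module.argocd,
    aws_eks_access_policy_association.argocd,
  ]
}
```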

Step 4: Deploy Your Applications

Now you're ready to create Argo CD Applications and Projects. Here's a quick example:

```yaml
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: my-app
  namespace: argocd
spec:
  project: default
  source:
    repoURL: https://github.com/<YOUR_ORG>/<YOUR_REPO>.git
    targetRevision: HEAD
    path: k8s/
  destination:
    name: in-cluster
    namespace: my-app
  syncPolicy:
    automated:
      prune: true
      selfHeal: true
    # Create the destination namespace if the manifests in k8s/ don't define it
    syncOptions:
      - CreateNamespace=true
```

Use destination.name with the cluster name you registered (like in-cluster). The destination.server field also works with EKS cluster ARNs, but using names is cleaner.
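If you prefer to keep Applications in Terraform as well, the same Application can be expressed with the `kubernetes_manifest` resource, here using the `destination.server` variant with the cluster ARN. This sketch assumes a `kubernetes` provider already configured against the cluster and a `cluster_arn` output on `module.eks`. Note that `kubernetes_manifest` needs the Argo CD CRDs to exist at plan time, so on a fresh cluster you may have to apply this in a second pass after the capability is up.

```hcl
# Argo CD Application managed by Terraform; still requires the cluster
# to be registered (Step 3) so the ARN resolves to a known destination.
resource "kubernetes_manifest" "my_app" {
  manifest = {
    apiVersion = "argoproj.io/v1alpha1"
    kind       = "Application"
    metadata = {
      name      = "my-app"
      namespace = "argocd"
    }
    spec = {
      project = "default"
      source = {
        repoURL        = "https://github.com/<YOUR_ORG>/<YOUR_REPO>.git"
        targetRevision = "HEAD"
        path           = "k8s/"
      }
      destination = {
        server    = module.eks.cluster_arn # EKS cluster ARN instead of a name
        namespace = "my-app"
      }
      syncPolicy = {
        automated = {
          prune    = true
          selfHeal = true
        }
        syncOptions = ["CreateNamespace=true"]
      }
    }
  }

  depends_on = [module.argocd]
}
```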

Things to Keep in Mind

- IAM Identity Center is mandatory. Local users are not supported, so make sure Identity Center is configured with the right groups before setting up the capability.
- Cluster registration is not automatic. Don't skip Step 3, or your deployments won't have a target.
- Access Entry dependency: the cluster secret registration depends on the access policy being in place. Terraform's `depends_on` is your friend here.
- Production RBAC: scope down from `AmazonEKSClusterAdminPolicy` to least-privilege custom roles for production workloads.

This is a solid step forward from AWS in reducing the operational burden of running GitOps tooling. No more managing Argo CD upgrades, HA configs, or SSO integrations manually. It just works as part of your EKS cluster lifecycle.

Happy GitOps-ing!

References:

https://docs.aws.amazon.com/eks/latest/userguide/argocd.html

https://docs.aws.amazon.com/eks/latest/userguide/argocd-register-clusters.html