K8s — Managing Multiple Ingress- Pods -> Service -> Nginx ingress -> AWS ingress — OpsInsights

A Software Product Engineer, Cloud enthusiast | Blogger | DevOps | SRE | Python Developer. I usually automate my day-to-day stuff and blog about my experience with challenging items.

No infrastructure stays undisturbed from its initial design onwards.

If you disagree, at least mine doesn't :p

Yes, we adopt change and always look for improvement. This post is not a technical deep dive; we will cover the scripting and how-to steps in another blog.

Let us consider a simple Django application. Django, like Flask, is one of the most popular web frameworks for Python.

Some of the main reasons to prefer Django:

Running a Django application is quite easy: `python manage.py runserver`.

Bingo! However, this works well only for the development environment. Django's development server is not built for production: it has not gone through security audits or performance tests, so the same cannot be used for production instances.

We need a wrapper, i.e., another layer (a gateway interface server) that runs the application with multiple workers and threads and manages the underlying Python processes for us.

Enter uWSGI, a popular application server that supports many languages and frameworks but was initially developed for Python.
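For context, the interface uWSGI talks to is WSGI (PEP 3333, linked at the end of this post): the application exposes a single callable that the server invokes once per request. The sketch below is a minimal, framework-free example of such a callable, plus a fake "server call" built with the standard library's `wsgiref.util.setup_testing_defaults`. Django's generated `wsgi.py` exposes the same kind of callable, named `application` and built by `get_wsgi_application()`; the toy app here is only an illustration of the interface, not how Django builds its own.

```python
from wsgiref.util import setup_testing_defaults


def application(environ, start_response):
    """A minimal WSGI callable, the interface PEP 3333 defines.

    An application server (uWSGI, gunicorn, wsgiref, ...) calls this once per
    request with a dict of CGI-style variables and a start_response callback.
    """
    path = environ.get("PATH_INFO", "/")
    body = f"Hello from {path}\n".encode("utf-8")
    start_response("200 OK", [
        ("Content-Type", "text/plain; charset=utf-8"),
        ("Content-Length", str(len(body))),
    ])
    # WSGI requires an iterable of bytes as the response body.
    return [body]


if __name__ == "__main__":
    # Simulate what an application server does for a single request.
    environ = {}
    setup_testing_defaults(environ)  # fills in PATH_INFO="/", HTTP_HOST, wsgi.* keys, ...
    captured = {}

    def start_response(status, headers):
        captured["status"] = status
        captured["headers"] = headers

    chunks = application(environ, start_response)
    print(captured["status"], b"".join(chunks))
```

The application server's job is everything around this callable: accepting connections, spawning worker processes and threads, and recycling them, which is exactly the part the development server does not do for production.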

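Django now runs on the uWSGI server with multi-threaded workers enabled. (Threads are off by default: uWSGI does not initialize the Python GIL unless threading is enabled with the `enable-threads` or `threads` option.)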

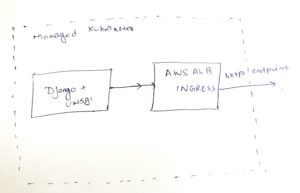

Since uWSGI was already serving Django, I preferred not to add Nginx and instead put the AWS ingress controller in front, which in turn creates Application Load Balancers (ALBs). All the configuration, including SSL offloading, was done at the ALB level.

The setup looks as below: Pods -> Service -> AWS ingress (ALB).

Problems in the above setup:

For the security and performance of the application, the uWSGI workers were configured to restart every 60 seconds. This clears out any hung (dead) service calls; the 60-second value was arrived at by benchmarking the application, on the condition that no service call waits for more than 60 seconds.

Okay, let us assume we now have good traffic: 1000 requests per minute. Every 60 seconds the workers are restarted, and any request sent to a worker while it is restarting returns a 502. This keeps happening at random across the application.

Approximately 5% of all requests are affected. From this we identified that the AWS ALB cannot route traffic only to the healthy uWSGI workers inside a container: its targets are pods (or nodes), so it has no visibility into the individual workers running inside them.

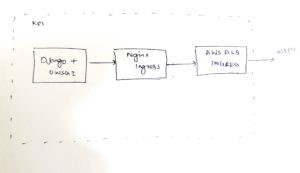
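To put the numbers above together: at 1000 requests per minute with roughly 5% failing, about 50 requests per minute return a 502. Going one step further requires a simplifying assumption that is mine, not a measurement: if requests arrive uniformly in time and a pod cannot serve anything for a window of d seconds in every 60-second restart cycle, the failure fraction is roughly d / 60, so 5% would correspond to about 3 seconds of unavailability per cycle. The helper names below are hypothetical.

```python
def expected_502s(requests_per_minute: float, failure_fraction: float) -> float:
    """Requests per minute expected to fail at a given failure fraction."""
    return requests_per_minute * failure_fraction


def implied_unavailable_seconds(failure_fraction: float, restart_interval_s: float) -> float:
    """Unavailable window per restart cycle implied by a failure fraction.

    Simplified model (an assumption): requests arrive uniformly in time and
    every request that lands in the d-second window fails, so
    failure_fraction ~= d / restart_interval_s.
    """
    return failure_fraction * restart_interval_s


if __name__ == "__main__":
    print(expected_502s(1000, 0.05))              # 50.0
    print(implied_unavailable_seconds(0.05, 60))  # 3.0
```

The model ignores how uWSGI staggers worker restarts and how the ALB spreads requests across pods, so treat the 3-second figure as an order-of-magnitude sanity check only.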

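**Nginx** handles this by default (for example, when a connection to one upstream fails or times out, it passes the request on to the next upstream), so the solution is to introduce an Nginx ingress between the AWS ingress (ALB) and the application service. I still need the AWS ALB because other settings, such as the Layer 7 WAF, are configured on it.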
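One default Nginx behavior that helps here is retrying a request on the next upstream when the connection to the first one fails. The sketch below is a simplified model of that idea, not Nginx's implementation: a proxy walks through the upstreams and only returns 502 when every attempt has failed. `UpstreamError`, `proxy_with_retry`, the `send` callable, and the addresses are hypothetical stand-ins for pod endpoints and a real HTTP call.

```python
from typing import Callable, Optional, Sequence, Tuple


class UpstreamError(Exception):
    """Raised by a send function when an upstream refuses or drops the connection."""


def proxy_with_retry(
    upstreams: Sequence[str],
    send: Callable[[str], str],
    max_tries: Optional[int] = None,
) -> Tuple[int, str]:
    """Try upstreams in order and return (status, body).

    A failed upstream is skipped and the request goes to the next one;
    the client only sees a 502 if every attempt fails.
    """
    tries = len(upstreams) if max_tries is None else min(max_tries, len(upstreams))
    for upstream in upstreams[:tries]:
        try:
            return 200, send(upstream)
        except UpstreamError:
            continue  # e.g. the worker behind this endpoint is restarting
    return 502, "Bad Gateway"


if __name__ == "__main__":
    restarting = {"10.0.1.12:8000"}  # hypothetical pod endpoint mid-restart

    def fake_send(upstream: str) -> str:
        if upstream in restarting:
            raise UpstreamError(upstream)
        return f"handled by {upstream}"

    print(proxy_with_retry(["10.0.1.12:8000", "10.0.2.7:8000"], fake_send))
    # (200, 'handled by 10.0.2.7:8000')
```

Real Nginx is more careful than this sketch: since version 1.9.13 it does not pass non-idempotent requests such as POST to the next server once they have already been sent upstream, and ingress-nginx lets you tune the retry conditions through its `proxy-next-upstream` setting.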

After updating the setup as described above, the 502 errors dropped drastically and are not a nightmare anymore.

- Launched the Nginx ingress controller with a Service of type NodePort, so that it does not launch any unnecessary load balancers.
- Mapped the AWS ingress to listen to the Nginx ingress service endpoints.
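The two steps above can be sketched as Kubernetes manifests. To stay in Python, this builds them as plain dicts and prints a JSON `List`, which `kubectl apply -f` accepts just like YAML. Everything specific here is an assumption for illustration: the `ingress-nginx` namespace, the `ingress-nginx-controller` Service name and pod labels (chosen to resemble the upstream ingress-nginx manifests; check your install), the hypothetical `alb-to-nginx` name and host, and the current `networking.k8s.io/v1` Ingress schema. The annotations are the AWS ALB ingress controller's keys; `target-type: instance` is used because the ALB has to reach Nginx through the NodePort on each node.

```python
import json


def nginx_nodeport_service(namespace: str = "ingress-nginx") -> dict:
    """NodePort Service for the Nginx ingress controller (no cloud load balancer)."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": "ingress-nginx-controller", "namespace": namespace},
        "spec": {
            # NodePort opens a port on every node instead of creating an ELB/NLB.
            "type": "NodePort",
            "selector": {  # assumed labels on the controller pods
                "app.kubernetes.io/name": "ingress-nginx",
                "app.kubernetes.io/component": "controller",
            },
            "ports": [{"name": "http", "port": 80, "targetPort": 80, "protocol": "TCP"}],
        },
    }


def alb_ingress(host: str, namespace: str = "ingress-nginx") -> dict:
    """ALB Ingress that forwards all traffic to the Nginx NodePort Service."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "Ingress",
        "metadata": {
            "name": "alb-to-nginx",  # hypothetical name
            # Same namespace as the Service: Ingress backends are namespace-local.
            "namespace": namespace,
            "annotations": {
                "kubernetes.io/ingress.class": "alb",
                "alb.ingress.kubernetes.io/scheme": "internet-facing",
                # Instance targets send ALB traffic to the NodePort on each node.
                "alb.ingress.kubernetes.io/target-type": "instance",
            },
        },
        "spec": {
            "rules": [{
                "host": host,
                "http": {"paths": [{
                    "path": "/",
                    "pathType": "Prefix",
                    "backend": {"service": {
                        "name": "ingress-nginx-controller",
                        "port": {"number": 80},
                    }},
                }]},
            }],
        },
    }


if __name__ == "__main__":
    manifests = {
        "apiVersion": "v1",
        "kind": "List",
        "items": [nginx_nodeport_service(), alb_ingress("app.example.com")],
    }
    print(json.dumps(manifests, indent=2))
```

The ALB-level settings mentioned earlier (SSL offloading, WAF) would stay as annotations on this ALB Ingress, while the application's own routing rules move to regular Ingress objects handled by Nginx.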

We will discuss how to do this setup in detail in our next blog.

More on the Python Web Server Gateway Interface (WSGI): https://www.python.org/dev/peps/pep-3333/

Originally published at https://opsinsights.dev on April 16, 2020.