Scaling Stateful Applications in Kubernetes: EKS + EFS.

A Software Product Engineer, Cloud enthusiast | Blogger | DevOps | SRE | Python Developer. I usually automate my day-to-day stuff and Blog my experience on challenging items.

Motivation:

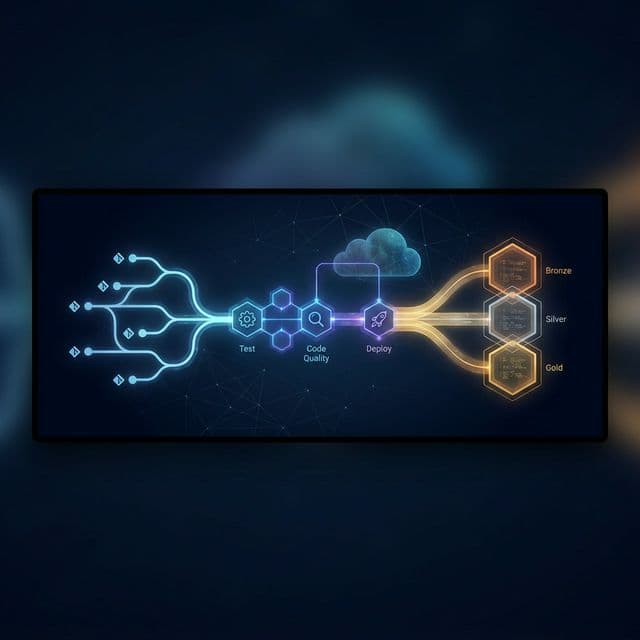

Kubernetes is widely recognized for its ability to manage containerized applications at scale. However, when it comes to managing stateful applications, certain considerations must be addressed. This article explores the challenges faced in scaling stateful applications and presents solutions for seamless scalability.

Let's consider a scenario where we have an application consisting of two microservices that communicate and require a shared volume to support specific processing operations.

Additionally, the data generated by these services needs to be retained for further analysis.

Requirement:

To ensure scalability among worker nodes, it is necessary to implement a solution that meets the following criteria:

- The pods must have access to a shared persistent volume.

Create EFS

Creating the EFS file system itself is out of scope here; we assume one already exists.

My EFS file system ID: fs-582a0sdat

Make sure to create an access point. An access point is an application-specific entry point into the file system that exposes a chosen directory as the root for the clients that mount through it.

My EFS access point path: /tmp/poc-efs

Deploy EFS driver in the cluster

Check out the official AWS documentation for the EFS CSI driver:

https://docs.aws.amazon.com/eks/latest/userguide/efs-csi.html

Create Storageclass

A StorageClass tells Kubernetes which provisioner to use when it provisions volumes. Many provisioners are available, such as EBS, GCE PD, and EFS.

https://kubernetes.io/docs/concepts/storage/storage-classes/#provisioner

We use the EFS provisioner for our use case.

storageclass.yaml

```yaml
kind: StorageClass
apiVersion: storage.k8s.io/v1
metadata:
  name: efs-sc
provisioner: efs.csi.aws.com
parameters:
  provisioningMode: efs-ap # provision volumes as EFS access points
  fileSystemId: fs-582a0sdat
  directoryPerms: "700"
  gidRangeStart: "1000" # optional
  gidRangeEnd: "2000" # optional
  basePath: "/dynamic_provisioning" # optional
```

```shell
kubectl apply -f storageclass.yaml
```

Verify that the EFS CSI driver controller pods are running:

```shell
kubectl get pods -n kube-system | grep efs-csi-controller
```
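Because the StorageClass sets provisioningMode to efs-ap, the driver can also create an access point on demand for each claim, under the configured basePath. The fragment below is a minimal sketch of such a dynamically provisioned claim; the name efs-dynamic-claim is hypothetical and not part of the original setup, which uses a statically defined volume instead.

```yaml
# Sketch only: a claim that relies on dynamic provisioning through efs-sc.
# "efs-dynamic-claim" is a hypothetical name chosen for illustration.
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: efs-dynamic-claim
spec:
  accessModes:
    - ReadWriteMany
  storageClassName: efs-sc
  resources:
    requests:
      storage: 5Gi # required by the API; EFS grows elastically
```

With this approach no PersistentVolume manifest is written by hand; the static approach described next gives more control over exactly which path is mounted.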

Create a persistent volume and a persistent volume claim.

```yaml
apiVersion: v1
kind: PersistentVolume
metadata:
  name: efs-pv1
spec:
  capacity:
    storage: 5Gi # required by the API; EFS itself is elastic
  volumeMode: Filesystem
  accessModes:
    - ReadWriteMany # lets pods on different nodes mount the volume
  persistentVolumeReclaimPolicy: Retain
  storageClassName: efs-sc
  mountOptions:
    - tls
  csi:
    driver: efs.csi.aws.com
    volumeHandle: fs-582a0sdat:/tmp/poc-efs # <file system ID>:<path>
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: efs-claim
spec:
  accessModes:
    - ReadWriteMany
  storageClassName: efs-sc
  resources:
    requests:
      storage: 5Gi
```

Verify the status:

```shell
kubectl get pv,pvc
```
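The volumeHandle in the persistent volume points at a path on the file system. If you would rather mount through the access point itself, the EFS CSI driver also accepts an access point ID as the third field of the handle, with the path field left empty. The fragment below is a sketch under that assumption; the volume name efs-pv-ap and the ID fsap-0123456789abcdef0 are hypothetical placeholders, so substitute the ID of your own access point.

```yaml
# Sketch only: a static PersistentVolume that mounts via an access point.
# "efs-pv-ap" and "fsap-0123456789abcdef0" are hypothetical placeholders.
apiVersion: v1
kind: PersistentVolume
metadata:
  name: efs-pv-ap
spec:
  capacity:
    storage: 5Gi
  volumeMode: Filesystem
  accessModes:
    - ReadWriteMany
  persistentVolumeReclaimPolicy: Retain
  storageClassName: efs-sc
  csi:
    driver: efs.csi.aws.com
    # Format: <file system ID>:<path>:<access point ID>
    volumeHandle: fs-582a0sdat::fsap-0123456789abcdef0
```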

Deploy Application

Let's use the claim in our deployment spec.

deployment.yaml

```yaml
apiVersion: apps/v1 # Deployments live in the apps/v1 API group
kind: Deployment
metadata:
  name: efs-app
  labels:
    app: efs-app
spec:
  replicas: 3
  selector:
    matchLabels:
      app: efs-app
  template:
    metadata:
      labels:
        app: efs-app # must match the selector above
    spec:
      containers:
        - name: myapp
          image: centos
          command: ["/bin/sh"]
          # Each replica appends a UTC timestamp to the shared file every 5 seconds
          args: ["-c", "while true; do echo $(date -u) >> /data/out; sleep 5; done"]
          volumeMounts:
            - name: persistent-storage
              mountPath: /data
      volumes:
        - name: persistent-storage
          persistentVolumeClaim:
            claimName: efs-claim
```

```shell
kubectl apply -f deployment.yaml
```
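The requirement was scalability across worker nodes, and ReadWriteMany is what allows replicas on different nodes to mount the same volume. The scheduler does not guarantee that replicas land on different nodes, though. One way to encourage that is a topology spread constraint in the pod template. The fragment below is a sketch of that idea and is not part of the original setup; it shows only the fields to merge into deployment.yaml.

```yaml
# Sketch only: fields to merge into the efs-app Deployment's pod template.
spec:
  template:
    spec:
      topologySpreadConstraints:
        - maxSkew: 1 # keep per-node replica counts within 1 of each other
          topologyKey: kubernetes.io/hostname # spread across nodes
          whenUnsatisfiable: ScheduleAnyway # prefer spreading, but never block scheduling
          labelSelector:
            matchLabels:
              app: efs-app
```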

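Now open a shell in any of the pods (for example with kubectl exec). The contents of the mount path /data are the same in every replica, so /data/out contains the timestamps written by all three pods.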
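The scenario at the start involved two services sharing one volume. A second workload can mount the same claim to confirm that data written by the efs-app replicas is visible elsewhere. The pod below is a minimal sketch of that check; the name efs-reader is hypothetical, and the pod mounts the volume read-only and simply follows the shared file.

```yaml
# Sketch only: a separate pod that reads what the efs-app replicas write.
# "efs-reader" is a hypothetical name chosen for illustration.
apiVersion: v1
kind: Pod
metadata:
  name: efs-reader
spec:
  containers:
    - name: reader
      image: centos
      # Follow the shared file; lines from all writers appear here
      command: ["/bin/sh", "-c", "tail -f /data/out"]
      volumeMounts:
        - name: persistent-storage
          mountPath: /data
          readOnly: true # this pod only consumes the data
  volumes:
    - name: persistent-storage
      persistentVolumeClaim:
        claimName: efs-claim
```

Once the pod is running, its logs (kubectl logs efs-reader) should show timestamps arriving from the writer replicas, which confirms the volume is shared.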

Thank you, Happy Provisioning!

References:

https://docs.aws.amazon.com/eks/latest/userguide/efs-csi.html